The NeRSemble 3D Head Avatar Benchmark

based on the data from

NeRSemble: Multi-view Radiance Field Reconstruction of Human Heads

SIGGRAPH 2023

Introduction

Benchmark v1 (2025)

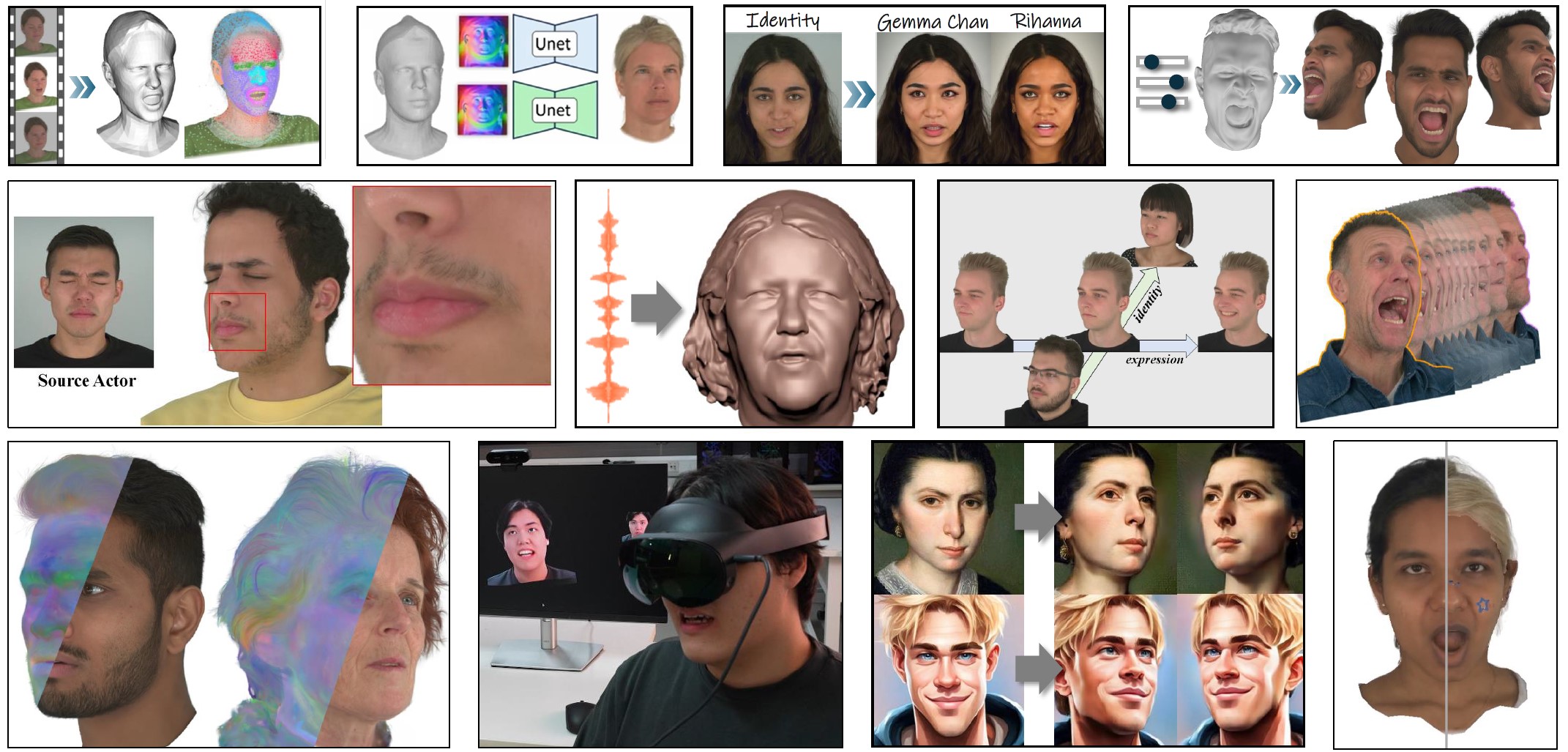

The NeRSemble benchmark aims to make research on photorealistic 3D head avatars more comparable.

The benchmark studies distinct phenomena of 3D head avatar creation, such as extreme facial expressions, slow-motion captures of shaking long hair, or the complicated light reflection and refraction patterns of glasses.

To this end, v1 of the benchmark introduces two tasks: Dynamic Novel View Synthesis on Heads and FLAME-driven Monocular Head Avatar Reconstruction.

These tasks assess two core desiderata of 3D avatars: while the novel view synthesis challenge focuses on the best possible rendering quality for complex moving scenes, the avatar animation challenge is concerned with how faithfully a driving signal is translated into an animated avatar.

Dynamic Novel View Synthesis.

The first task, dynamic novel view synthesis, is particularly interesting for digital humans, where the bar for a free-viewpoint video to be perceived as "real" is exceptionally high because humans are highly sensitive to faces. Human heads constitute an excellent playground for benchmarking dynamic novel view synthesis methods, as many complex physical phenomena, such as topological changes, light reflections and refractions, sub-surface scattering, or fast movements of thin structures, can be studied in a very controlled setting. In the benchmark, 13 synchronized video streams for 5 challenging human head sequences are provided, from which a high-quality dynamic 3D representation has to be reconstructed.

FLAME-driven Monocular Head Avatar Reconstruction.

In contrast, the monocular FLAME avatar challenge poses an under-constrained setting, as data from only a single frontal camera is provided, mimicking the use case of a user casually creating an avatar of themselves with their phone. Here, the focus lies on re-animating the avatar with unseen expressions and rendering it from unseen views. In the interest of comparability, we restrict the driving signal to FLAME expression codes, which are currently a popular choice for animating 3D head avatars. The benchmark dataset provides high-quality FLAME tracking that was obtained by fitting the 3D face model to accurate 3D point clouds reconstructed from all 16 camera views.
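
To make this setting concrete, here is a minimal Python sketch of the contract such an avatar has to fulfil. Everything in it is hypothetical and not part of the benchmark code: the names `FlameFrame`, `HeadAvatar`, `fit`, `render` and `MeanColorAvatar`, the grayscale list-of-lists image type, the string camera identifier, and the expression-code length `EXPR_DIM = 100`. What it illustrates is the split described above: the avatar is built from frontal frames plus their FLAME tracking, and at test time it receives only an expression code and a camera, never the target image.

```python
from dataclasses import dataclass
from typing import List, Protocol, Sequence

EXPR_DIM = 100  # hypothetical FLAME expression-code length; check the benchmark data
Image = List[List[float]]  # simplification: H x W grayscale image, values in [0, 1]


@dataclass
class FlameFrame:
    """One training frame from the single frontal camera (hypothetical layout)."""
    image: Image
    expression: Sequence[float]  # tracked FLAME expression code for this frame


class HeadAvatar(Protocol):
    """Hypothetical contract for a FLAME-driven monocular head avatar."""

    def fit(self, frames: Sequence[FlameFrame]) -> None:
        """Build the avatar from frontal frames and their FLAME tracking."""
        ...

    def render(self, expression: Sequence[float], camera_id: str) -> Image:
        """Animate with a (possibly unseen) expression and render from a given camera."""
        ...


class MeanColorAvatar:
    """Toy baseline: validates, but otherwise ignores, the driving signal and
    always predicts the mean training image."""

    def fit(self, frames: Sequence[FlameFrame]) -> None:
        h, w = len(frames[0].image), len(frames[0].image[0])
        self.mean = [
            [sum(f.image[y][x] for f in frames) / len(frames) for x in range(w)]
            for y in range(h)
        ]

    def render(self, expression: Sequence[float], camera_id: str) -> Image:
        # The only driving signal an avatar may use is the FLAME expression code.
        if len(expression) != EXPR_DIM:
            raise ValueError(f"expected {EXPR_DIM} expression coefficients")
        return [row[:] for row in self.mean]


frames = [
    FlameFrame([[0.0, 1.0]], [0.0] * EXPR_DIM),
    FlameFrame([[1.0, 1.0]], [0.1] * EXPR_DIM),
]
avatar: HeadAvatar = MeanColorAvatar()
avatar.fit(frames)
print(avatar.render([0.5] * EXPR_DIM, "held_out_camera"))  # [[0.5, 1.0]]
```

`MeanColorAvatar` is deliberately trivial; an actual method would condition its 3D representation on the expression code and render through the calibration of the requested camera.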

For all benchmark tasks, evaluation is performed on held-out camera viewpoints that are not part of the published benchmark data.
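
The metrics used for this evaluation are not listed here. As an illustration of what scoring renderings against held-out views involves, the sketch below computes PSNR, a common image metric for novel view synthesis, and averages it over all (frame, held-out camera) pairs. The metric choice, the [0, 1] value range, the per-pair averaging, and the helper names `psnr` and `mean_psnr` are assumptions; the official evaluation runs on the hidden views and may use different or additional metrics.

```python
import math
from typing import Dict, List, Tuple

Image = List[List[float]]  # simplification: H x W grayscale image, values in [0, 1]
Key = Tuple[int, str]      # hypothetical key: (frame index, held-out camera id)


def psnr(pred: Image, target: Image, max_val: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB between two equally sized images."""
    if len(pred) != len(target) or any(len(p) != len(t) for p, t in zip(pred, target)):
        raise ValueError("image shapes differ")
    sq_err = [(p - t) ** 2 for prow, trow in zip(pred, target) for p, t in zip(prow, trow)]
    mse = sum(sq_err) / len(sq_err)
    if mse == 0.0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_val ** 2 / mse)


def mean_psnr(renders: Dict[Key, Image], ground_truth: Dict[Key, Image]) -> float:
    """Average PSNR over every (frame, held-out camera) pair in the ground truth."""
    missing = ground_truth.keys() - renders.keys()
    if missing:
        raise KeyError(f"no rendering submitted for {sorted(missing)}")
    scores = [psnr(renders[k], ground_truth[k]) for k in ground_truth]
    return sum(scores) / len(scores)


gt = {(0, "cam_a"): [[0.0, 1.0]]}
pred = {(0, "cam_a"): [[0.1, 1.0]]}
print(round(mean_psnr(pred, gt), 2))  # 23.01
```

Requiring a rendering for every ground-truth key mirrors the fact that participants cannot see the held-out views and therefore have to render all requested viewpoints.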

Benchmark v2 (2026)

Version 2 of the NeRSemble benchmark introduces the new Single-view 3D Face Reconstruction task and updates the FLAME-driven Monocular Head Avatar Reconstruction task.

Single-view 3D Face Reconstruction.

This challenge measures two important aspects of 3D avatar creation: (i) the geometric detail of the reconstructed 3D face, and (ii) the ability to disentangle expression from identity. Both are assessed by evaluating the accuracy of 3D meshes reconstructed from a single image of a person, in two subtasks:

- Posed Reconstruction: To measure (i) geometric detail, a 3D mesh of the person's head has to be reconstructed showing the exact same facial expression as the input image.

- Neutral Reconstruction: To measure (ii) identity-expression disentanglement, a 3D mesh of the same person in neutral expression has to be reconstructed.
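
This page does not state which error metric is used for the reconstructed meshes. A common way to score a mesh against reference geometry is the symmetric Chamfer distance between points sampled from both surfaces, sketched below in plain Python. It assumes both meshes have already been sampled into point sets and aligned in the same metric coordinate frame; the sampling density, alignment, any cropping to the face region, and whether distances are squared are all unstated here and may differ in the official evaluation. The same function would serve both subtasks; only the reference changes (the person in the input expression for Posed Reconstruction, the same person in neutral expression for Neutral Reconstruction).

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def _nearest_dist(p: Point, cloud: Sequence[Point]) -> float:
    """Euclidean distance from p to its nearest neighbour in cloud (brute force)."""
    return min(math.dist(p, q) for q in cloud)


def chamfer_distance(pred: Sequence[Point], ref: Sequence[Point]) -> float:
    """Symmetric Chamfer distance: mean of the two average nearest-neighbour distances.

    Assumes both point sets were sampled from meshes in the same aligned frame.
    """
    if not pred or not ref:
        raise ValueError("point sets must be non-empty")
    accuracy = sum(_nearest_dist(p, ref) for p in pred) / len(pred)      # pred -> ref
    completeness = sum(_nearest_dist(q, pred) for q in ref) / len(ref)   # ref -> pred
    return 0.5 * (accuracy + completeness)


print(chamfer_distance([(0.0, 0.0, 0.0)], [(0.0, 0.0, 0.0), (0.0, 0.0, 1.0)]))  # 0.25
```

The brute-force nearest-neighbour search is O(N*M); for scan-sized point sets a spatial index such as a k-d tree is the usual replacement.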

FLAME-driven Monocular Head Avatar Reconstruction (v2).

The underlying FLAME tracking that is used both for creating avatars from the training videos and for driving them on the test sequences has been improved. The updated tracking features much more stable torso tracking, more expressive lip closures during speech, and more accurate mouth tracking for challenging facial expressions. To ensure comparability, a separate leaderboard has been set up that only includes methods that had access to the updated tracking. From now on, submissions are only accepted for v2 of the task.

Download the benchmark data

The benchmark data is based on the NeRSemble dataset.

To download the benchmark data, please follow the steps in our NeRSemble benchmark GitHub repo. You first have to request access to the NeRSemble dataset via this form. Once your application is approved (typically within 1 day), you can use the download scripts in the repo to obtain the benchmark data.

Citation

If you use the NeRSemble benchmark data or code, please cite:

@article{kirschstein2023nersemble,
  author = {Kirschstein, Tobias and Qian, Shenhan and Giebenhain, Simon and Walter, Tim and Nie\ss{}ner, Matthias},
  title = {NeRSemble: Multi-View Radiance Field Reconstruction of Human Heads},
  year = {2023},
  issue_date = {August 2023},
  publisher = {Association for Computing Machinery},
  address = {New York, NY, USA},
  volume = {42},
  number = {4},
  issn = {0730-0301},
  url = {https://doi.org/10.1145/3592455},
  doi = {10.1145/3592455},
  journal = {ACM Trans. Graph.},
  month = {jul},
  articleno = {161},
  numpages = {14},
}

License

The NeRSemble benchmark data is released under the same Terms of Use as the NeRSemble dataset, which you have to agree to before access is granted.